The temperature asymptotically approaches equilibrium, just like the voltage in an RC circuit. The thermal mass of the chip + heat sink is like a capacitor, the thermal connection from chip to air is like a resistor, and the constant heat power input is like current. The equilibrium point (above ambient) depends linearly on total power.
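
As a rough illustration of the RC analogy, here is a minimal Python sketch of the first-order step response. The thermal resistance, thermal capacitance, power, and ambient values are made-up numbers for illustration, not measurements of any real CPU or cooler, and a real chip + heat sink will have more than one time constant.

```python
import math


def chip_temperature(t, power_w, r_th, c_th, t_ambient=25.0):
    """Temperature (deg C) t seconds after a constant power step is applied.

    Lumped single-RC model: r_th in K/W (the "resistor"), c_th in J/K
    (the "capacitor"), power_w in W (the "current").
    """
    tau = r_th * c_th            # time constant in seconds
    delta_eq = power_w * r_th    # equilibrium rise above ambient, linear in power
    return t_ambient + delta_eq * (1.0 - math.exp(-t / tau))


# Hypothetical values chosen only to make the curve easy to read.
R_TH = 0.5   # K/W
C_TH = 4.0   # J/K, so tau = 2 s

for t in (0.0, 1.0, 2.0, 5.0, 10.0):
    temp = chip_temperature(t, power_w=90.0, r_th=R_TH, c_th=C_TH)
    print(f"t = {t:4.1f} s   T = {temp:5.1f} C")
```

With a small `c_th`, most of the rise happens within a few seconds, and doubling `power_w` doubles the equilibrium rise above ambient.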

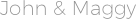

"I don't see what prevents the temperature from continuing to rise." A higher temperature difference (between chip and heat sink, and between heat sink and air) means more heat transfer per unit time (aka power). My cooler is a CoolerMaster Gemini II, a big clunky thing with heat pipes and a big fan that barely turns at room temp, so mine has more thermal mass than a stock cooler. And the rear case fan literally stops when CPU / mobo temps are below ~40C, as I configured it in the BIOS. Of course the "k" models are overclockable, so the stock turbo settings are conservative, but I like to keep my fans quiet and not have a burst of fan spin-up noise when a clunky web page loads.

(And that's not really limited by overall thermals, more just by how fast the transistors are and / or by not creating a hot-spot on the one core that's active.)

There isn't much thermal mass in the chip + heat sink compared to the power that flows through it when it's > 50C above ambient, so it quickly reaches equilibrium. On my i7-6700k (Skylake quad-core desktop), starting a high-power process like video encoding (x264 or x265) will ramp the cores up from ~25C idle (room temp) to ~50 or 60C within a second, then they quickly settle near 70C or so, depending on whether max all-core turbo is 3.9 or 4.0GHz via energy_performance_preference. (Intel since Skylake has hardware power management, so it can ramp up from idle clocks in microseconds, not milliseconds. Clock speed decisions are made in hardware.)

"There is no frequency scaling to drop the frequency (like DVFS); I mean that once it reaches its high-performance speed it stays there (3.1GHz)." If you mean throttling (down from max all-core turbo, if that's higher than the rated / "guaranteed" sustained frequency), that depends on workload. To make enough heat to make turbo unsustainable, you need to run SIMD FMAs or something similarly high-power (Prime95 or video encoding), not just a dummy loop. Even Intel's stock cooler typically has enough cooling capacity to sustain some turbo with all cores busy on a lot of workloads, staying below sustained TDP. Or maybe your CPU's max all-core turbo isn't any higher than its rated speed.

Any help understanding this would be greatly appreciated. This is on an Intel Core i7 Skylake architecture. I ran the same experiment on an RPi, and it took the fully loaded quad core about 60 seconds before frequency scaling set in, so I have no idea what's happening now that I am trying to bring the project to a more complex architecture. The temperature basically spikes instantaneously, doesn't change while the jobs are running, and then vanishes as soon as the job finishes. Is this expected behavior? Is it physically possible for the temperature sensors to see that much change this quickly?
If so, I'm in trouble in terms of characterizing temperature changes.

I am attempting to do some data science with CPU core temperatures. I need to monitor how CPU core temperature changes over time. I am attempting to use two tools to do this: stress to load the cores, and lm-sensors for measuring core and package temperature. The problem I am seeing is that as soon as stress starts the temperature skyrockets, and as soon as it stops it plummets. Here is a little shell script and output to demonstrate the problem:

`stress: info: dispatching hogs: 8 cpu, 0 io, 0 vm, 0 hdd`
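
For logging temperature over time at a finer granularity, one option is to poll the kernel's hwmon sysfs files directly. The sketch below is not the script from the post; it assumes a Linux system where the sensor driver exposes `temp*_input` files (values in millidegrees Celsius) under `/sys/class/hwmon`. The function names, the 50 ms interval, and the sample count are illustrative choices.

```python
import glob
import time


def read_celsius(path):
    """Read one sysfs temperature file (millidegrees C) and return degrees C."""
    with open(path) as f:
        return int(f.read().strip()) / 1000.0


def readable(paths):
    """Keep only the sensor files that can actually be read and parsed."""
    ok = []
    for p in paths:
        try:
            read_celsius(p)
            ok.append(p)
        except (OSError, ValueError):
            pass
    return ok


def sample(paths, interval_s=0.05, count=100):
    """Poll every path `count` times; return (elapsed_seconds, [temps]) rows."""
    rows = []
    start = time.monotonic()
    for _ in range(count):
        temps = [read_celsius(p) for p in paths]
        rows.append((time.monotonic() - start, temps))
        time.sleep(interval_s)
    return rows


if __name__ == "__main__":
    # Assumed location of the temperature inputs; adjust for your machine.
    sensor_paths = readable(sorted(glob.glob("/sys/class/hwmon/hwmon*/temp*_input")))
    for elapsed, temps in sample(sensor_paths, interval_s=0.05, count=40):
        print(f"{elapsed:7.3f}", *(f"{t:5.1f}" for t in temps))
```

Starting this just before the load and letting it run past the end of the job should show whether the rise and fall are resolved by the chosen polling interval, or whether they happen between two samples.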